JIT Trace

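torch.jit.trace takes an eager model and a dummy input. Guided by that model and input, the tracer records the operations executed as the data flows through the model, then converts the whole model into a TorchScript module.

The rest of this section works through a concrete example with BERT (Bidirectional Encoder Representations from Transformers).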
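Before the BERT walkthrough, a minimal sketch of the tracing step on its own may help. `TinyNet` and its layer sizes are hypothetical and exist only for illustration; the only API exercised is `torch.jit.trace`, which runs the module once on the dummy input and records the operations it executes.

```python
import torch


class TinyNet(torch.nn.Module):
    """Hypothetical two-layer module used only to illustrate tracing."""

    def __init__(self):
        super().__init__()
        self.linear = torch.nn.Linear(4, 2)

    def forward(self, x):
        return torch.relu(self.linear(x))


model = TinyNet().eval()
dummy_input = torch.rand(1, 4)

# Run the model once on the dummy input and record the executed operations
traced = torch.jit.trace(model, dummy_input)

# The recorded graph can be inspected directly
print(traced.graph)

# The traced module should reproduce the eager output for the same input
with torch.no_grad():
    outputs_match = torch.allclose(model(dummy_input), traced(dummy_input))
print(outputs_match)
```

Since only the operations executed for the dummy input are recorded, the dummy input should have the shape and dtype the model will see at inference time.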

from transformers import BertTokenizer, BertModel
import numpy as np
import torch
from time import perf_counter

def timer(f, *args):
    # Time a single call to f(*args) and return the elapsed time in milliseconds
    start = perf_counter()
    f(*args)
    return 1000 * (perf_counter() - start)

# Load the BERT model
native_model = BertModel.from_pretrained("bert-base-uncased")

# In the Hugging Face API, torchscript=True prepares the model for TorchScript export;
# the actual conversion happens later, in torch.jit.trace
script_model = BertModel.from_pretrained("bert-base-uncased", torchscript=True)
script_tokenizer = BertTokenizer.from_pretrained('bert-base-uncased', torchscript=True)

# Tokenizing input text
text = "[CLS] Who was Jim Henson ? [SEP] Jim Henson was a puppeteer [SEP]"
tokenized_text = script_tokenizer.tokenize(text)

# Masking one of the input tokens
masked_index = 8
tokenized_text[masked_index] = '[MASK]'
indexed_tokens = script_tokenizer.convert_tokens_to_ids(tokenized_text)
segments_ids = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1]

# Creating a dummy input
tokens_tensor = torch.tensor([indexed_tokens])
segments_tensors = torch.tensor([segments_ids])

Next, measure the inference speed of eager-mode PyTorch on the CPU and on the GPU.

# Benchmark the eager model on the CPU
native_model.eval()
np.mean([timer(native_model, tokens_tensor, segments_tensors) for _ in range(100)])

# Benchmark the eager model on the GPU
native_model = native_model.cuda()
native_model.eval()
tokens_tensor_gpu = tokens_tensor.cuda()
segments_tensors_gpu = segments_tensors.cuda()
np.mean([timer(native_model, tokens_tensor_gpu, segments_tensors_gpu) for _ in range(100)])
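One caveat about GPU measurements of this kind: CUDA kernels are launched asynchronously, so reading `perf_counter()` right after a GPU forward pass may stop the clock before the work has finished. A hedged sketch of a stricter timer follows. `timed_ms`, `warmup`, and `runs` are hypothetical names, not part of the original benchmark, and the numbers reported in this article were not produced with it.

```python
from time import perf_counter

import torch


def timed_ms(f, *args, warmup=5, runs=20):
    """Return the mean latency of f(*args) in milliseconds, after warm-up calls."""
    use_cuda = torch.cuda.is_available()
    with torch.no_grad():  # inference only, so skip autograd bookkeeping
        for _ in range(warmup):
            f(*args)
        if use_cuda:
            torch.cuda.synchronize()  # make sure warm-up work has finished
        start = perf_counter()
        for _ in range(runs):
            f(*args)
        if use_cuda:
            torch.cuda.synchronize()  # wait for queued kernels before stopping the clock
    return 1000 * (perf_counter() - start) / runs


# Usage on a small hypothetical layer (runs on the CPU as written)
layer = torch.nn.Linear(64, 64).eval()
x = torch.rand(8, 64)
latency_ms = timed_ms(layer, x)
print(f"mean latency: {latency_ms:.4f} ms")
```

The warm-up calls also matter for TorchScript modules, whose first few invocations can include one-time optimization work.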

Then measure the inference speed of the TorchScript model on the CPU and on the GPU.

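# Benchmark TorchScript on the CPU
traced_model = torch.jit.trace(script_model, [tokens_tensor, segments_tensors])

# from_pretrained returns the model in evaluation mode and the trace records that behavior,
# so there is no need to call .eval() explicitly on the traced model
np.mean([timer(traced_model, tokens_tensor, segments_tensors) for _ in range(100)])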

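# Benchmark TorchScript on the GPU
# (this listing is missing in the source; the lines below are an assumed reconstruction that mirrors the CPU case)
traced_model_gpu = torch.jit.trace(script_model.cuda(), [tokens_tensor_gpu, segments_tensors_gpu])
np.mean([timer(traced_model_gpu, tokens_tensor_gpu, segments_tensors_gpu) for _ in range(100)])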

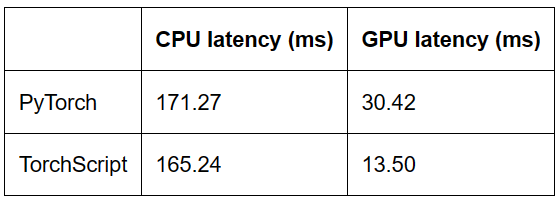

The final results are shown in the table.

I ran the tests on Google Colab; the CPU is an Intel(R) Xeon(R) CPU @ 2.00GHz and the GPU is a Tesla T4.

In these results, TorchScript is 3.5% faster than eager PyTorch on the CPU and 55.6% faster on the GPU.
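The gains are reported as percentages without the formula being stated. One common convention, assumed here, is the reduction in mean latency relative to the eager baseline. `percent_faster` is a hypothetical helper, and the latencies below are made-up placeholders, not the measurements from the table.

```python
def percent_faster(baseline_ms, candidate_ms):
    """Latency reduction of the candidate relative to the baseline, in percent."""
    return 100 * (baseline_ms - candidate_ms) / baseline_ms


# Placeholder latencies for illustration only (not taken from the article's results)
eager_ms = 40.0
torchscript_ms = 30.0

gain = percent_faster(eager_ms, torchscript_ms)
print(f"TorchScript is {gain:.1f}% faster")
```

Under a different convention, such as throughput ratio, the same pair of latencies would give a different percentage, so it is worth stating which one is used when comparing results.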

Next, we run the same kind of test with ResNet.

import torchvision
import torch
from time import perf_counter
import numpy as np

def timer(f, *args):
    # Time a single call to f(*args) and return the elapsed time in milliseconds
    start = perf_counter()
    f(*args)
    return 1000 * (perf_counter() - start)

# PyTorch CPU version
model_ft = torchvision.models.resnet18(pretrained=True)
model_ft.eval()
x_ft = torch.rand(1, 3, 224, 224)
print(f'pytorch cpu: {np.mean([timer(model_ft, x_ft) for _ in range(10)])}')

# PyTorch GPU version
model_ft_gpu = torchvision.models.resnet18(pretrained=True).cuda()
x_ft_gpu = x_ft.cuda()
model_ft_gpu.eval()
print(f'pytorch gpu: {np.mean([timer(model_ft_gpu, x_ft_gpu) for _ in range(10)])}')

# TorchScript CPU version
# torch.jit.script compiles the module from its source and takes no example input;
# the tensor passed as the second argument in the original call was not used as one
script_cell = torch.jit.script(model_ft)
print(f'torchscript cpu: {np.mean([timer(script_cell, x_ft) for _ in range(10)])}')

# TorchScript GPU version
script_cell_gpu = torch.jit.script(model_ft_gpu)
print(f'torchscript gpu: {np.mean([timer(script_cell_gpu, x_ft_gpu) for _ in range(100)])}')

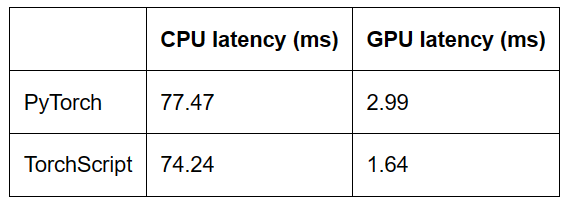

Compared with the eager PyTorch model, TorchScript improves CPU performance by 4.2% and GPU performance by 45%, which is consistent with the BERT results.
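Note that the ResNet listing compiles the model with `torch.jit.script`, whereas the BERT example uses `torch.jit.trace`. Both produce a TorchScript module. As a sketch of how the two calls differ, the following uses a small hypothetical network (`SmallConvNet`, chosen so that it runs without downloading weights) rather than ResNet-18: scripting compiles the module's Python source and needs no example input, while tracing requires one.

```python
import torch


class SmallConvNet(torch.nn.Module):
    """Hypothetical stand-in for ResNet-18, small enough to run anywhere."""

    def __init__(self):
        super().__init__()
        self.conv = torch.nn.Conv2d(3, 8, kernel_size=3, padding=1)
        self.pool = torch.nn.AdaptiveAvgPool2d(1)
        self.fc = torch.nn.Linear(8, 10)

    def forward(self, x):
        x = torch.relu(self.conv(x))
        x = torch.flatten(self.pool(x), 1)
        return self.fc(x)


model = SmallConvNet().eval()
x = torch.rand(1, 3, 32, 32)

# Scripting: compiles forward() from source, no example input required
scripted = torch.jit.script(model)

# Tracing: runs the model on an example input and records the operations
traced = torch.jit.trace(model, (x,))

# For a model without data-dependent control flow, both should agree
with torch.no_grad():
    results_agree = torch.allclose(scripted(x), traced(x), atol=1e-5)
print(results_agree)
```

For a feed-forward network like this one either route works; tracing tends to be the simpler choice when the model contains Python constructs that the TorchScript compiler does not support.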
